The Senate voted down a bipartisan bill Wednesday aimed at stripping legal liability protections for artificial intelligence (AI) technology.

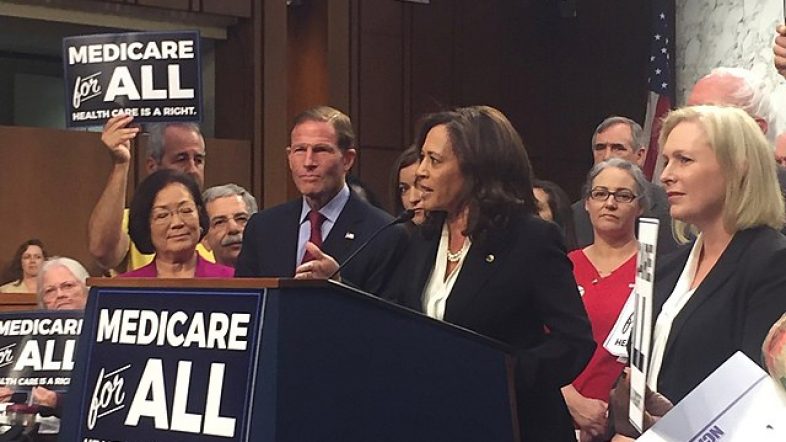

Republican Missouri Sen. Josh Hawley and Democratic Connecticut Sen. Richard Blumenthal first introduced their No Section 230 Immunity for AI Act in June, and Hawley put it up for a unanimous consent vote on Wednesday. The bill would have eliminated the Section 230 protections that currently grant tech platforms immunity from liability for the text and visual content their AI produces, enabling Americans to sue those platforms.

The bill would have had major implications for speech and innovation, experts previously told the Daily Caller News Foundation. Section 230 of the Communications Decency Act of 1996 provides that internet companies cannot be held liable for third-party speech posted on their platforms.

The No Section 230 Immunity for AI Act defines generative AI as “an artificial intelligence system that is capable of generating novel text, video, images, audio, and other media based on prompts or other forms of data provided by a person.” Because it was a unanimous consent motion, a single objection was enough to keep it from passing, according to the Senate glossary.

The bill “would threaten freedom of expression, content moderation, and innovation,” a coalition, including technology and liberty advocacy groups, wrote to Senate Majority Leader Chuck Schumer of New York and Senate Minority Leader Mitch McConnell in a Monday letter. “Far from targeting any clear problem, the bill takes a sweeping, overly broad approach, preempting an important public policy debate without sufficient consideration of the complexities at hand.”

NetChoice, a group whose members include companies like Google and TikTok, says the bill’s definition of generative AI is so vague and broad that it could apply to any action a computer takes, and even to calculators.

“Lawmakers are misdirecting their anger at tech platforms when they should be focusing on how the government is trying to use its power to force companies to do the government’s bidding,” NetChoice’s Vice President & General Counsel Carl Szabo told the DCNF.

The bill could also crush American innovation, R Street Institute Senior Fellow Adam Thierer told the DCNF.

“The bill is troubling because it would actively undermine one of the legal foundations of the Digital Revolution, thus harming the ability of American innovators to be as innovative and globally competitive as they otherwise would be,” Thierer told the DCNF. “Section 230 is the secret sauce behind America’s success in digital markets globally. We shouldn’t abandon it lightly.”

Democratic Oregon Sen. Ron Wyden, one of the authors of Section 230, told The Washington Post in March that it should not apply to AI.

“AI tools like ChatGPT, Stable Diffusion and others being rapidly integrated into popular digital services should not be protected by Section 230,” he told the Post. “And it isn’t a particularly close call … Section 230 is about protecting users and sites for hosting and organizing users’ speech” and it “has nothing to do with protecting companies from the consequences of their own actions and products.”

Hawley and Blumenthal have argued that providing Section 230 liability safeguards to AI would protect tech firms from accountability for the perceived harms of their products.

“We can’t make the same mistakes with generative AI as we did with Big Tech on Section 230,” Hawley stated. “When these new technologies harm innocent people, the companies must be held accountable. Victims deserve their day in court.”

“AI companies should be forced to take responsibility for business decisions as they’re developing products — without any Section 230 legal shield,” Blumenthal stated.

Jason Cohen on December 13, 2023